How we made historical blockchain data backfills 12x faster

Improving Turbo pipeline backfills with Apache Arrow

Chief Technology Officer

Last updated: March 2026

tl;dr: Understanding where your data physically lives and what format it's in gets you more throughput from the same resources. We got 12x faster historical backfills in Turbo Pipelines by reading co-located ClickHouse data as native Arrow instead of consuming Avro through Kafka: ~600,000 rows/s vs ~50,000 rows/s on our densest dataset, with no additional infrastructure cost. All benchmarks were run on the smallest pipeline size available. In production, throughput scales linearly with pipeline size (up to 20x on our self-serve tier).

The hard problem: backfills and real-time in one pipeline

Goldsky processes real-time blockchain data across 150+ chains. Our customers generally fall into two camps when it comes to historical data:

- "Give me everything." Analytics teams, indexers, and data platforms that need every row from genesis. They're backfilling billions of rows and want it done in hours, not days.

- "Give me a slice." App developers tracking a specific contract or event. They need selective, filtered data, but they still need it from block zero.

Both need historical data as fast as possible and real-time events as new blocks land, from a single pipeline.

These are fundamentally different access patterns. Real-time streaming is a firehose of individual events, and Kafka (we use WarpStream) excels at this. Historically, we used Kafka for all backfills too. Kafka is well-suited for bulk sequential reads and it kept load off our data lake. But it meant every message had to be individually deserialized from Avro, and scaling Kafka consumption for large backfills required expensive autoscaling infrastructure.

We built the hybrid source architecture in Turbo to address this for the "give me a slice" customers first. The idea: read historical data directly from our ClickHouse data lake via fast-scan, then flip to WarpStream once the pipeline catches up to the chain tip. For selective backfills with filters, this was already working well.
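The handoff described above can be sketched in a few lines. This is a minimal illustration, not Turbo's implementation: `hybrid_source`, `scan_history`, `stream_live`, and `chain_tip` are hypothetical names, and the in-memory stand-ins below replace ClickHouse and WarpStream.

```python
from typing import Callable, Iterable, Iterator, Tuple

Row = Tuple[int, str]  # (block_number, payload); a stand-in for a real record


def hybrid_source(
    scan_history: Callable[[int, int], Iterable[Row]],
    stream_live: Callable[[int], Iterable[Row]],
    chain_tip: Callable[[], int],
    start_block: int = 0,
    page_blocks: int = 1_000,
) -> Iterator[Row]:
    """Yield historical rows from the bulk store, then switch to the live stream."""
    next_block = start_block
    # Phase 1: page through history until we have caught up to the chain tip.
    while True:
        tip = chain_tip()  # re-read each page, since the chain keeps growing
        if next_block > tip:
            break
        end = min(next_block + page_blocks - 1, tip)
        yield from scan_history(next_block, end)
        next_block = end + 1
    # Phase 2: caught up, so hand over to the stream at the first unseen block.
    yield from stream_live(next_block)


# Hypothetical in-memory stand-ins for the two sources.
def scan(lo: int, hi: int) -> list:
    return [(b, f"row-{b}") for b in range(lo, hi + 1)]


def live(from_block: int) -> list:
    return [(b, f"live-{b}") for b in range(from_block, from_block + 2)]


rows = list(hybrid_source(scan, live, chain_tip=lambda: 9, page_blocks=4))
```

The important detail is that the stream starts at the first block the scan did not cover, so the switch neither drops nor duplicates rows.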

Existing fast-scan: already fast for selective backfills

The hybrid source's fast-scan was optimized for the "give me a slice" customers: filtering on a contract address or event type. For these sparse, selective backfills, fast-scan can rip through an entire chain in under 20 seconds.

But for the "give me everything" customers, we were still using Kafka. This scaled very well, since you can run as many parallel workers as you have Kafka partitions, which let us reach up to 2 million records per second. However, that approach just throws compute at the problem.

Benchmark setup

We ran three scenarios against Base traces, one of our densest datasets (wide rows, massive volume). All tests used:

- Pipeline size: s (our smallest allocation)

- Sink: blackhole (isolating source throughput from sink write speed)

- Filter: full dump (every row in the dataset)

- Engine: Turbo (our Rust-based streaming engine using Apache Arrow and DataFusion)

Results: Kafka vs bloom-filter vs partition-based pagination

| Scenario | Source | Throughput | vs. Kafka |
|---|---|---|---|
| Kafka baseline | WarpStream (Avro) | ~50K rows/s | 1x |
| Bloom-filter scan | ClickHouse (Arrow) | ~350K rows/s | ~7x |
| Partition-based scan | ClickHouse (Arrow) | ~600K rows/s | ~12x |

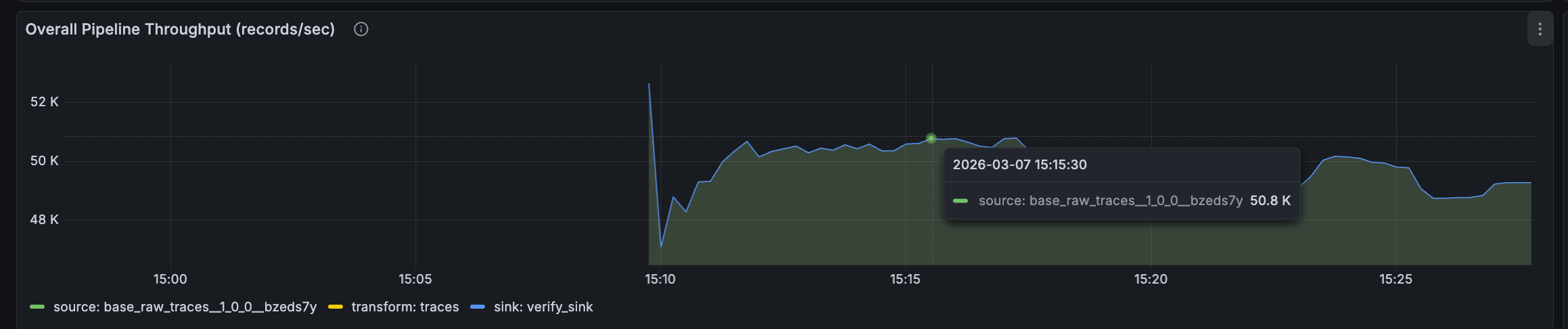

Scenario 1: Kafka consumption baseline, ~50K rows/s

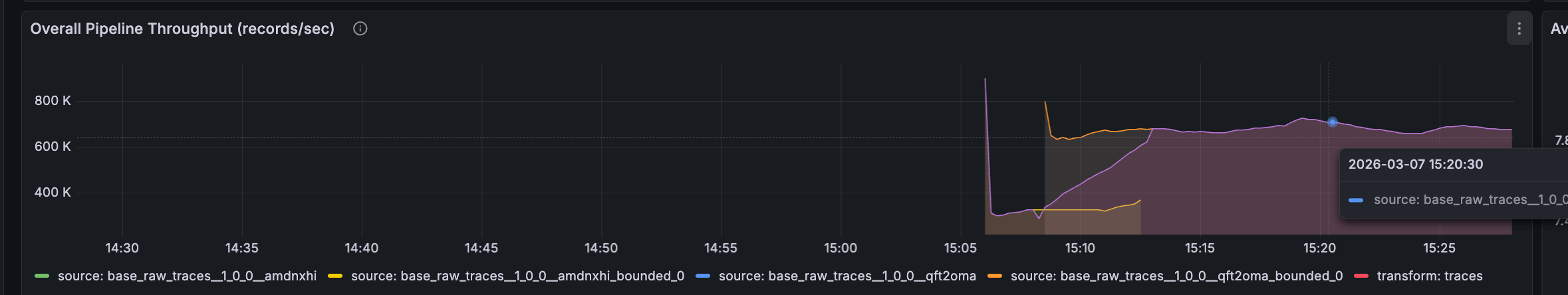

Scenario 2: Arrow with bloom-filter pagination, ~350K rows/s

Scenario 3: Arrow with partition-based pagination, ~600K rows/s

The Arrow format accounts for the bulk of the improvement (7x) and the pagination strategy adds another 1.7x on top.
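As a quick check of how the two factors combine, the ratios can be recomputed from the rounded throughput figures in the table. The variable names are illustrative only.

```python
# Approximate throughputs from the results table, in rows per second.
kafka_avro = 50_000
bloom_arrow = 350_000
partition_arrow = 600_000

format_gain = bloom_arrow / kafka_avro           # Avro over Kafka -> Arrow from ClickHouse
pagination_gain = partition_arrow / bloom_arrow  # bloom-filter -> partition-based pagination
total_gain = partition_arrow / kafka_avro        # end-to-end improvement

print(f"format: {format_gain:.1f}x, pagination: {pagination_gain:.1f}x, total: {total_gain:.0f}x")
```

The gains multiply rather than add: roughly 7x from the format change times roughly 1.7x from pagination gives the 12x headline number.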

All benchmarks were run on our free-tier pipeline size, a small. Each pipeline is capped at 1 vCPU with a parallelism of 1.

With Kafka-based ingest, throughput wasn't necessarily the bottleneck. Scaling Kafka consumption required expensive infrastructure and a pretty fancy autoscaling setup. With the Arrow-from-ClickHouse path, throughput is so high on a single small worker that many full-chain backfills complete without needing to scale at all.

Multiple pipeline workers scale throughput linearly as well. The partition-based pagination scheme is naturally parallelizable, with different workers fetching non-overlapping ranges concurrently. We can scale cheaply, but now we mostly don't have to.
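One way to picture the parallelism is to cut the chain into fixed block ranges and deal them out to workers. The sketch below assumes a simple round-robin assignment; `split_ranges` and `assign` are hypothetical helpers, not part of Turbo.

```python
from typing import List, Tuple

BlockRange = Tuple[int, int]  # inclusive (start_block, end_block)


def split_ranges(first_block: int, last_block: int, range_size: int) -> List[BlockRange]:
    """Cut [first_block, last_block] into contiguous, non-overlapping ranges."""
    return [
        (start, min(start + range_size - 1, last_block))
        for start in range(first_block, last_block + 1, range_size)
    ]


def assign(ranges: List[BlockRange], workers: int) -> List[List[BlockRange]]:
    """Deal ranges out round-robin so every range has exactly one owner."""
    return [ranges[w::workers] for w in range(workers)]


ranges = split_ranges(0, 3_499_999, 1_000_000)
plan = assign(ranges, workers=2)
# Worker 0 owns the 1st and 3rd ranges, worker 1 the 2nd and 4th.
```

Because no two workers ever share a range, they need no coordination beyond the initial plan.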

Why Arrow from ClickHouse is so fast

The Arrow-from-ClickHouse path is absurdly efficient. When Turbo consumes from Kafka, every message is Avro-encoded and requires per-message deserialization. For a wide dataset like Base traces multiplied by billions of rows, that CPU cost adds up.

The ClickHouse path eliminates this entirely. Data arrives as native Apache Arrow record batches, the same columnar format Turbo's execution engine (DataFusion) operates on internally. There's no deserialization step. Data flows straight from source into transform and sink stages.
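The difference between the two paths can be illustrated with a toy encoding built from the Python standard library. This is neither the Avro nor the Arrow wire format, and the field names are made up; it only contrasts one decode call per message with reinterpreting a whole column buffer in place.

```python
import struct
from array import array

ROW = struct.Struct("=qq")  # toy row encoding: (block_number, gas_used) as two int64s


def decode_rows(messages):
    """Row-oriented path: decode every message, then pivot the fields into columns."""
    blocks, gas = [], []
    for msg in messages:
        block_number, gas_used = ROW.unpack(msg)  # per-message CPU work
        blocks.append(block_number)
        gas.append(gas_used)
    return blocks, gas


def view_columns(block_buf, gas_buf):
    """Columnar path: view each buffer as int64 values without copying or parsing."""
    return memoryview(block_buf).cast("q"), memoryview(gas_buf).cast("q")


block_values = list(range(5))
gas_values = [21_000 * (i + 1) for i in range(5)]

# The same data, shipped either as one message per row or as one buffer per column.
messages = [ROW.pack(b, g) for b, g in zip(block_values, gas_values)]
block_buf = array("q", block_values).tobytes()
gas_buf = array("q", gas_values).tobytes()

row_blocks, row_gas = decode_rows(messages)
col_blocks, col_gas = view_columns(block_buf, gas_buf)
```

In the row path the work grows with the number of messages. In the columnar path the bytes received are already in the layout the engine computes on, so the "decode" is a constant-time view per column.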

Columnar compression makes the wire transfer compact too. Similar values in the same column cluster together and compress well. A billion-row result set as Arrow is surprisingly small on the wire compared to equivalent Avro messages.

Bloom-filter vs partition-based pagination

The existing fast-scan uses bloom-filter-based pagination. For selective queries, bloom filters are much faster: ClickHouse can use them to skip granules that definitely don't contain matching rows, which is why selective queries (filtering on a contract address) can blast through an entire chain in seconds. For the "give me a slice" customer, this is the right approach.

But when a chain is huge and the data is wide and dense, the physical mechanics change. Bloom filters don't help when nearly every granule contains matching data. On dense datasets, bloom filter queries were causing excessive CPU usage on ClickHouse and frequent timeouts from the database. Page sizes had to stay small to avoid those timeouts, which meant lots of round trips for bulk dumps. This is why we hadn't used the bloom-filter strategy for dense backfills before.
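A toy model makes the sparse-versus-dense contrast concrete. The sketch below builds a small Bloom filter per granule and asks which granules a lookup would have to read. It is an illustration under simplifying assumptions, not ClickHouse's index implementation: the filter size, hash scheme, and data are invented, and the filters are rebuilt on each call for brevity, whereas a real index is built when data is written.

```python
import hashlib
from typing import Iterable, List


class Bloom:
    """A tiny Bloom filter: may report false positives, never false negatives."""

    def __init__(self, bits: int = 256, hashes: int = 3) -> None:
        self.bits, self.hashes, self.mask = bits, hashes, 0

    def _positions(self, key: str) -> Iterable[int]:
        for i in range(self.hashes):
            digest = hashlib.sha256(f"{i}:{key}".encode()).digest()
            yield int.from_bytes(digest[:8], "big") % self.bits

    def add(self, key: str) -> None:
        for pos in self._positions(key):
            self.mask |= 1 << pos

    def might_contain(self, key: str) -> bool:
        return all((self.mask >> pos) & 1 for pos in self._positions(key))


def granules_to_read(granules: List[List[str]], key: str) -> List[int]:
    """Return the indexes of granules that cannot be skipped for `key`."""
    selected = []
    for index, values in enumerate(granules):
        bloom = Bloom()
        for value in values:
            bloom.add(value)
        if bloom.might_contain(key):
            selected.append(index)
    return selected


TARGET = "0xtarget"  # hypothetical contract address
# Sparse case: the target appears in a single granule out of 100.
sparse = [[f"0x{g}-{i}" for i in range(8)] for g in range(100)]
sparse[42].append(TARGET)
# Dense case: every granule contains matching rows.
dense = [[f"0x{g}-{i}" for i in range(8)] + [TARGET] for g in range(100)]

sparse_hits = granules_to_read(sparse, TARGET)
dense_hits = granules_to_read(dense, TARGET)
```

In the sparse case almost every granule is skipped. In the dense case every filter answers "maybe", so the filter checks are pure overhead on top of reading everything anyway.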

The new approach uses partition-based pagination tied directly to ClickHouse's physical data layout. Fetch blocks 0–1M, then 1M–2M, and so on, with backoff if a range is too large. Each query maps to a known set of partitions.
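The pagination loop can be sketched as follows. This assumes a halve-on-failure backoff and a reset to the full range after each success; the post does not specify the actual policy, and `paginate`, `RangeTooLarge`, and `fake_fetch` are hypothetical names.

```python
from typing import Callable, Iterator, Tuple


class RangeTooLarge(Exception):
    """Raised by the fetch function when a block range yields too much data."""


def paginate(
    fetch: Callable[[int, int], int],
    first_block: int,
    last_block: int,
    range_size: int = 1_000_000,
) -> Iterator[Tuple[Tuple[int, int], int]]:
    """Walk inclusive block ranges in order, halving the range when one is too large."""
    start, size = first_block, range_size
    while start <= last_block:
        end = min(start + size - 1, last_block)
        try:
            page = fetch(start, end)
        except RangeTooLarge:
            if size == 1:
                raise  # a single block is already too large; give up
            size = max(1, size // 2)  # back off and retry the same start block
            continue
        yield (start, end), page
        start = end + 1
        size = range_size  # assumption: try the full range again after a success


def fake_fetch(start: int, end: int) -> int:
    """Stand-in for a range query: blocks from 2M onward are 4x denser."""
    rows = (end - start + 1) * (4 if start >= 2_000_000 else 1)
    if rows > 1_000_000:
        raise RangeTooLarge(f"{rows} rows in blocks {start}-{end}")
    return rows


pages = list(paginate(fake_fetch, 0, 2_999_999))
fetched_ranges = [block_range for block_range, _ in pages]
```

With this stand-in, the first two million blocks are fetched in two full-size pages, while the denser final million is split into four quarter-size pages. Every range stays contiguous and non-overlapping regardless of how often the loop backs off.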

The tradeoff is that partition-based scanning is slower for very sparse data (minutes vs seconds with bloom filters). But for dense backfills:

- Strongly bounded CPU usage. Each query targets a known partition range, so ClickHouse only touches the partitions it needs and CPU usage stays predictable.

- Massive page sizes. We can safely set page sizes to 10M rows because each query is bounded to a known partition range. Combined with Arrow's efficient columnar transfer, bulk data moves fast.

Napkin math: what 12x faster backfills mean in practice

Backfilling 10 billion rows of Base traces on our smallest pipeline size:

- Kafka (50K rows/s): ~55 hours to backfill. More than two full days.

- Arrow fast-scan (600K rows/s): ~4.6 hours. You can start before lunch and be caught up by the end of day. On a single small worker. Scale up and it gets proportionally faster.
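The arithmetic behind those two figures, using the rounded benchmark throughputs (`backfill_hours` is just an illustrative helper):

```python
def backfill_hours(rows: int, rows_per_second: int) -> float:
    """Time to stream `rows` at a constant rate, in hours."""
    return rows / rows_per_second / 3600


TOTAL_ROWS = 10_000_000_000

kafka_hours = backfill_hours(TOTAL_ROWS, 50_000)
arrow_hours = backfill_hours(TOTAL_ROWS, 600_000)

print(f"Kafka: {kafka_hours:.1f} h, Arrow fast-scan: {arrow_hours:.1f} h")
```

This assumes the source sustains its benchmark rate for the whole run and that the sink keeps up, which is what the caveats below address.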

Caveats

- Blackhole sinks. We're measuring pure source throughput. Real pipelines write to Postgres, ClickHouse, S3, etc., each with their own ceilings.

- Dense dataset. Sparser, selective workloads should use existing fast-scan, which is already sub-20-second for full chain scans with filters.

What's next for fast-scan on Turbo

- Parallel block-range fetching across concurrent workers, even for small pipelines.

- Broader rollout across more chains (view supported networks)

None of this required new infrastructure or a bigger budget. It required overfitting to the colocation and data format characteristics of what we already had and building a pipeline that takes advantage of both.

We're shipping these improvements as part of Turbo Pipelines, currently in beta. If you're building on blockchain data and spending too long waiting for backfills, give it a try.